
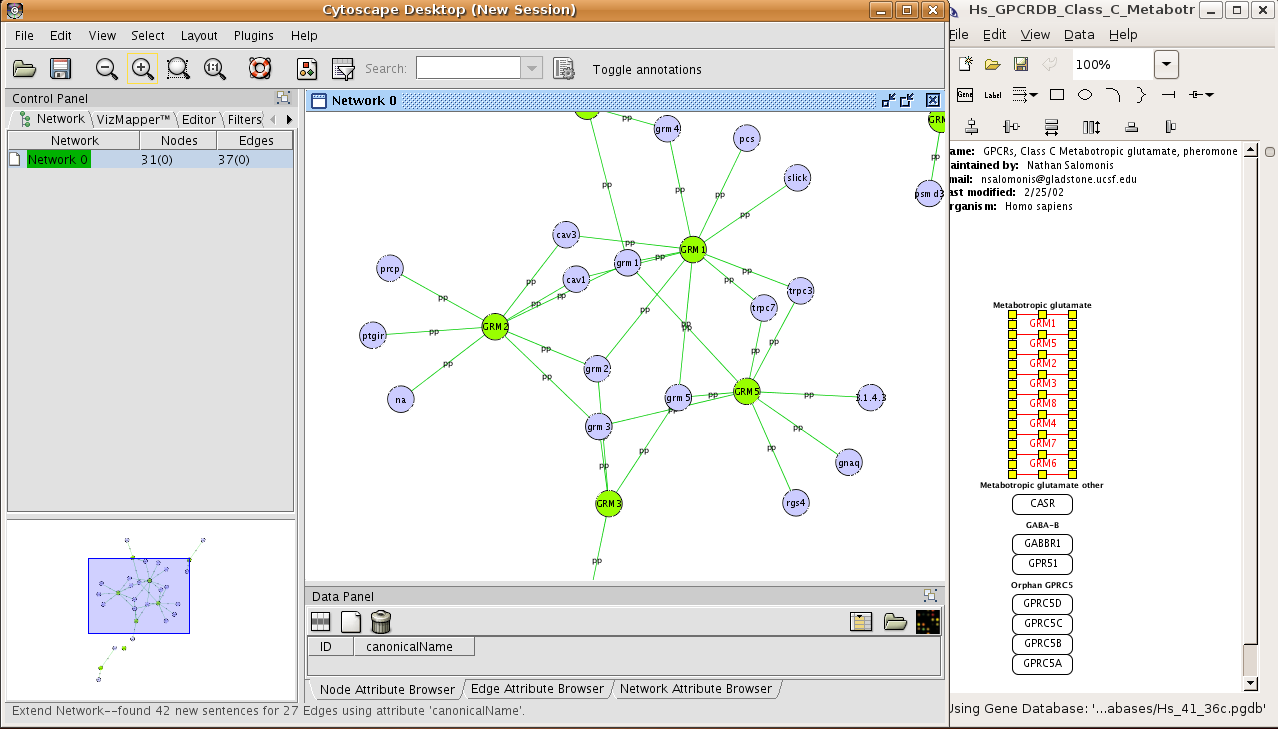

9/25/2023

GPU for large Cytoscape networks

GPUs and GPU platforms have been responsible for the dramatic advancement of deep learning and other neural net methods in the past several years. At the same time, traditional machine learning workloads, which comprise the majority of business use cases, continue to be written in Python with heavy reliance on a combination of single-threaded tools (e.g., pandas and scikit-learn) or large, multi-CPU distributed solutions (e.g., Spark and PySpark). RAPIDS is the next big step in data science, combining the ease of use of common APIs and the power and scalability of GPUs.

Bartley Richardson and Joshua Patterson offer an overview of RAPIDS and explore cuDF, cuGraph, and cuML, a trio of RAPIDS tools that enable data scientists to work with data in a familiar interface and apply graph analytics and traditional machine learning techniques. Bartley Richardson is a senior data scientist on the AI infrastructure team at NVIDIA. His focus at NVIDIA is the research and application of GPU-accelerated methods that can help solve today's information security and cybersecurity challenges.

RAPIDS cuDF allows for moving the vast majority of machine learning workloads from a CPU environment to GPUs. This affords a substantial speedup, particularly on large datasets, enabling rapid, interactive work that previously was cumbersome to code or very slow to execute. The RAPIDS cuML library operates directly on cuDF data frames to apply traditional machine learning analytics (e.g., PCA, DBSCAN, k-means, kNN, and tSVD) at scale and with GPU acceleration.

Many data science problems can be approached using a graph/network view, and much like traditional machine learning workloads, this work has been either local (e.g., Gephi, Cytoscape, NetworkX) or distributed on CPU platforms (e.g., GraphX). The cuGraph GPU-accelerated graph library allows, with minimal conceptual code changes, both graph representations and graph-based analytics to achieve similar speedups on a GPU platform. By keeping all of these tasks on the GPU and minimizing redundant I/O, data scientists can model their data quickly and frequently, affording a higher degree of experimentation and more effective model generation. Further, keeping all of this in compatible formats allows quick movement from feature extraction to graph representation, graph analytics, enrichment back to the original data, and visualization of results.
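To make the workflow of feature extraction, graph representation, graph analytic, and enrichment a little more concrete, here is a minimal CPU-only sketch that uses just the Python standard library. It is not RAPIDS code and not the speakers' implementation: the records, the column names (`src`, `dst`, `bytes`), and the helper functions are all hypothetical, and PageRank is only one example of a graph analytic. In a RAPIDS setting the table would presumably live in a cuDF data frame and the analytic would come from cuGraph, so the data would stay on the GPU between steps.

```python
# Hypothetical network-flow records (illustrative data, not from the talk).
RECORDS = [
    {"src": "10.0.0.1", "dst": "10.0.0.2", "bytes": 1200},
    {"src": "10.0.0.1", "dst": "10.0.0.3", "bytes": 300},
    {"src": "10.0.0.2", "dst": "10.0.0.3", "bytes": 4500},
    {"src": "10.0.0.4", "dst": "10.0.0.3", "bytes": 80},
    {"src": "10.0.0.3", "dst": "10.0.0.1", "bytes": 950},
]


def extract_edges(records):
    """Feature extraction: reduce each record to a (source, destination) edge."""
    return [(r["src"], r["dst"]) for r in records]


def pagerank(edges, damping=0.85, iterations=50):
    """Graph analytic: power-iteration PageRank over a directed edge list."""
    nodes = sorted({n for edge in edges for n in edge})
    out_links = {n: [] for n in nodes}
    for src, dst in edges:
        out_links[src].append(dst)

    n_nodes = len(nodes)
    rank = {n: 1.0 / n_nodes for n in nodes}
    for _ in range(iterations):
        # Rank held by nodes with no outgoing edges is spread over all nodes.
        dangling = sum(rank[n] for n in nodes if not out_links[n])
        base = (1.0 - damping) / n_nodes + damping * dangling / n_nodes
        new_rank = {n: base for n in nodes}
        for src, targets in out_links.items():
            if not targets:
                continue
            share = damping * rank[src] / len(targets)
            for dst in targets:
                new_rank[dst] += share
        rank = new_rank
    return rank


def enrich(records, rank):
    """Enrichment: attach each destination's score back onto the original rows."""
    return [{**r, "dst_pagerank": rank[r["dst"]]} for r in records]


scores = pagerank(extract_edges(RECORDS))
enriched = enrich(RECORDS, scores)
top = max(scores, key=scores.get)  # node with the highest PageRank score
```

The point of the sketch is the shape of the pipeline: every step consumes and produces plain tabular or edge-list data, which is what lets compatible GPU formats avoid round trips through host memory or disk.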
